IPTC has recently been working with many organisations that are developing solutions to the ongoing problem of misinformation and disinformation in news. We are happy to announce that this work continues through IPTC’s liaison relationship with C2PA, the Coalition for Content Provenance and Authenticity.

C2PA was created to unify the efforts of the Adobe-led Content Authenticity Initiative (CAI), which focuses on systems to provide context and history for digital media, and Project Origin, a Microsoft- and BBC-led initiative that tackles disinformation in the digital news ecosystem. C2PA creates technical standards for certifying the source and history (or provenance) of media content.

The IPTC has been working with both the Content Authenticity Initiative and Project Origin in recent years. Andy Parsons from CAI presented at the IPTC Photo Metadata Conference in 2020. IPTC members who are also members of CAI and/or Project Origin include Adobe, BBC, CBC/Radio Canada and The New York Times.

IPTC and C2PA have agreed to share information and allow each organisation to attend the other’s meetings in the areas of technical specifications of file formats, particularly around image and video files; to share knowledge and expertise around newsroom practices and workflows; and to collaborate in the areas of content syndication and distribution.

The IPTC Photo Metadata Standard is widely used by photographers, photo agencies and other photo suppliers around the world. To help them use it correctly, IPTC publishes a detailed specification document.

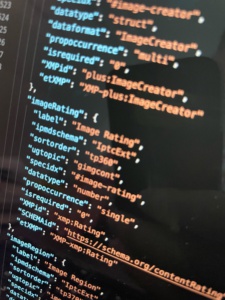

Now, we have released a machine-readable version of the spec that can be consumed directly by software tools.

We call it the IPTC Photo Metadata TechReference. (See below for direct links to the data files.)

The TechReference is a data object containing all the details of the IPTC Photo Metadata technical specifications in the easy-to-use JSON and YAML formats.

The file covers all IPTC properties and structures.

For each property, we specify:

- the property’s formal name;

- corresponding identifiers in the ISO XMP and the IPTC IIM formats, if applicable;

- the property’s datatype, such as string, number or a custom property structure like Location;

- the property identifier that can be used with ExifTool to read or write the metadata property (such as “XMP-dc:creator” for XMP or “IPTC:Creator” for IIM);

- … and a few more details.
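As a rough illustration of how a software tool might consume such a data object, the Python sketch below builds a lookup from each property name to its ExifTool identifiers. The key names (`properties`, `name`, `datatype`, `xmp_id`, `iim_id`, `exiftool_xmp`, `exiftool_iim`), the inline sample and the `exiftool_tags` helper are assumptions made for this example, not the actual TechReference layout; the TechReference documentation describes the real structure.

```python
import json

# Hypothetical sample data: the key names are assumptions for illustration,
# not the real TechReference layout.
SAMPLE = """
{
  "properties": [
    {"name": "Headline", "datatype": "string",
     "xmp_id": "photoshop:Headline", "iim_id": "2:105",
     "exiftool_xmp": "XMP-photoshop:Headline",
     "exiftool_iim": "IPTC:Headline"},
    {"name": "Location Shown", "datatype": "Location",
     "xmp_id": "Iptc4xmpExt:LocationShown", "iim_id": null,
     "exiftool_xmp": "XMP-iptcExt:LocationShown",
     "exiftool_iim": null}
  ]
}
"""


def exiftool_tags(reference: dict) -> dict:
    """Map each property name to the ExifTool identifiers defined for it."""
    tags = {}
    for prop in reference["properties"]:
        candidates = (prop.get("exiftool_xmp"), prop.get("exiftool_iim"))
        # Skip formats that do not apply (for example, no IIM counterpart).
        tags[prop["name"]] = [tag for tag in candidates if tag]
    return tags


reference = json.loads(SAMPLE)
tags_by_name = exiftool_tags(reference)
for name, tags in tags_by_name.items():
    print(f"{name}: {', '.join(tags)}")
```

A lookup of this kind lets a tool pick the right identifier per format without hard-coding the specification.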

We have also published rich documentation about the TechReference data object on the IPTC website. The data objects themselves can be downloaded from the IPTC site by both IPTC members and other interested parties.

Links:

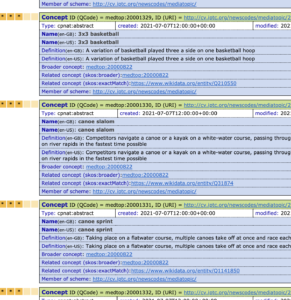

In time for the 2020 Summer Olympics, soon to be held in Tokyo, Japan (in 2021), we have released a new version of the Media Topics vocabulary covering all Olympic sports.

As many MediaTopics users don’t use the sports facets system, we wanted to make sure that the top-level Olympic and Paralympic sports were all represented in the main Media Topics vocabulary.

To make this possible, we have made the following changes:

New and changed labels and definitions for Olympics and Paralympics

We have added the following new sport concepts, all under Competition Discipline:

- medtop:20001329 3×3 basketball

- medtop:20001330 canoe slalom

- medtop:20001331 canoe sprint

- medtop:20001332 bmx freestyle

- medtop:20001333 road cycling

- medtop:20001334 track cycling

- medtop:20001335 football 5-a-side

- medtop:20001336 goalball

Modified labels:

- medtop:20001093 weightlifting -> weightlifting and powerlifting

- medtop:20000895 bmx -> bmx racing

We have “unretired” the following term, which was retired in 2017:

- medtop:20001077 marathon swimming

We have moved the following term:

- medtop:20000887 sport climbing to under “competition discipline”
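For users who keep a local copy of Media Topics labels, changes like those listed above could be applied along the following lines. This is a minimal sketch: the `labels` dictionary and the `concept_uri` and `apply_label_changes` helpers are hypothetical, only a few of the listed concepts are included, and the code assumes that the `medtop:` prefix expands to `http://cv.iptc.org/newscodes/mediatopic/`.

```python
# Assumed expansion of the "medtop:" prefix into a concept URI base.
MEDTOP_BASE = "http://cv.iptc.org/newscodes/mediatopic/"


def concept_uri(qcode: str) -> str:
    """Expand a 'medtop:NNNN' code into a full concept URI."""
    prefix, _, code = qcode.partition(":")
    if prefix != "medtop" or not code:
        raise ValueError(f"not a medtop code: {qcode!r}")
    return MEDTOP_BASE + code


def apply_label_changes(labels: dict, added: dict, modified: dict) -> dict:
    """Return a copy of labels with new concepts added and labels updated."""
    updated = dict(labels)
    updated.update(added)
    for qcode, label in modified.items():
        # Only relabel concepts that the local copy already knows about.
        if qcode in updated:
            updated[qcode] = label
    return updated


# Hypothetical local label cache from before this release.
labels = {
    "medtop:20001093": "weightlifting",
    "medtop:20000895": "bmx",
}
added = {
    "medtop:20001329": "3×3 basketball",
    "medtop:20001336": "goalball",
}
modified = {
    "medtop:20001093": "weightlifting and powerlifting",
    "medtop:20000895": "bmx racing",
}

updated = apply_label_changes(labels, added, modified)
for qcode, label in sorted(updated.items()):
    print(concept_uri(qcode), label)
```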

Updated translations

In another major update, we have added labels in French, Spanish and Arabic for most recently added terms. Thanks to Anne Raynaud and her team at Agence France-Presse (AFP) for this contribution.

Another small change is that in the HTML tree view, we now mark retired concepts more clearly by visually striking out their labels and definitions.

We always welcome feedback on IPTC MediaTopics and the other NewsCodes vocabularies on the public discussion list iptc-newscodes@groups.io.

IPTC’s Video Metadata Working Group is happy to announce that the first version of the IPTC Video Metadata Hub Generator tool has been released. It can be used to create IPTC Video Metadata Hub records without any knowledge of the underlying technical metadata schema.

The Video Metadata Hub tool serves as a demonstrator to show how easy it could be to enter metadata for a video using the Video Metadata Hub common video metadata schema. It illustrates the power of Video Metadata Hub to video architects, digital asset managers and developers of video software and systems.

How do I use the Video Metadata Hub Generator?

To use the tool, simply start typing text into the fields of the form on the left-hand side of the screen. The right-hand side will update automatically to show a JSON version of the VMHub data according to the IPTC Video Metadata Hub JSON schema.

Because one of the features of IPTC Video Metadata Hub is its rich set of mappings to other well-known video formats, we will be adding other output formats such as XML (NewsML-G2), EBUCore, XMP and EIDR.

What can I do with the output?

The resulting JSON file can be used to supply data to IT systems. Alternatively, the generated JSON file can be saved alongside your video assets as a “sidecar”. This usage is explained in the section of the IPTC Video Metadata Hub User Guide called “Using Video Metadata Hub with your video content”.
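As an illustration of the sidecar approach, the Python sketch below writes a metadata record to a JSON file stored next to a video file. The property names in `record`, the convention of reusing the video's file name with a `.json` extension, and the `write_sidecar` helper are all assumptions for this example; the Video Metadata Hub JSON schema and the User Guide define the actual properties and recommended practice.

```python
import json
import tempfile
from pathlib import Path


def write_sidecar(video_path: Path, record: dict) -> Path:
    """Save record as JSON next to the video, reusing its base file name."""
    sidecar = video_path.with_suffix(".json")
    sidecar.write_text(
        json.dumps(record, indent=2, ensure_ascii=False), encoding="utf-8"
    )
    return sidecar


# Hypothetical record: these property names are placeholders, not taken
# from the Video Metadata Hub JSON schema.
record = {
    "title": "Harbour at dawn",
    "description": "Fishing boats leaving the harbour before sunrise.",
}

# A temporary folder stands in for the directory holding the video assets.
with tempfile.TemporaryDirectory() as folder:
    video = Path(folder) / "harbour.mp4"
    video.touch()  # empty placeholder for the video file
    sidecar = write_sidecar(video, record)
    sidecar_name = sidecar.name
    round_trip = json.loads(sidecar.read_text(encoding="utf-8"))

print(sidecar_name)
```

Keeping the sidecar's base name identical to the video's makes it easy for other tools to pair the two files.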

In the future, we hope that Video Metadata Hub properties will be built into many video editing tools and digital asset management systems, along with a common way of embedding the metadata properties in video files. Once this happens, users will be able to fill out standardised metadata fields in one tool and then view the entered metadata when loading that video file into another tool.

The current version of the Video Metadata Hub Generator shows only a small subset of the 91 Video Metadata Hub fields. In the future, we aim to add a control that lets users specify their use case (for example “video archives” or “news agency”) so that all of the relevant fields for that use case are displayed.

For more information, see the IPTC Video Metadata Hub pages on iptc.org or the Video Metadata Hub User Guide.

We are very interested in feedback from users. Join the conversation about this tool on the public iptc-videometadata email discussion group.